Ayush Parchure

Content Writer, Flexprice

How to choose the right pricing metric for your AI product

Most AI companies don’t end up with a single pricing metric.

They test a few, see where margins break, and adjust once real usage data starts coming in.

The key question isn’t “which metric is best?” but which metric reflects how customers actually get value from your product. The sections below describe when each model tends to work.

When token-based pricing works best

Token pricing works when the core value of your product is directly tied to how much the model processes.

In these cases, tokens are the closest proxy for both compute cost and product usage, so charging on tokens keeps revenue aligned with infrastructure spend.

Token pricing usually works well when:

Your product is LLM-heavy (generation, summarization, RAG queries, chat APIs)

Your users are developers or technical teams comfortable with token concepts

You want pricing to track the model cost directly

Usage can vary dramatically across customers

Your product behaves more like AI infrastructure than an end-user app
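To make the mechanics concrete, here is a minimal sketch of token-based billing. The per-million-token costs, the markup, and the function name are hypothetical values chosen for illustration; they are not any provider's real prices.

```python
from decimal import Decimal

# Hypothetical provider cost per 1M tokens (illustrative numbers only).
COST_PER_MILLION = {"input": Decimal("3.00"), "output": Decimal("15.00")}
MARKUP = Decimal("1.5")  # assumed 50% markup on top of model cost


def token_charge(input_tokens: int, output_tokens: int) -> Decimal:
    """Price usage by tokens consumed, so revenue tracks model cost."""
    cost = (
        Decimal(input_tokens) * COST_PER_MILLION["input"]
        + Decimal(output_tokens) * COST_PER_MILLION["output"]
    ) / Decimal(1_000_000)
    return (cost * MARKUP).quantize(Decimal("0.0001"))


# A month with 2M input tokens and 500k output tokens.
print(token_charge(2_000_000, 500_000))  # 20.2500
```

Because the charge is a fixed multiple of the underlying cost, margin stays constant no matter how much a customer's usage varies.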

When outcome-based pricing makes sense

Outcome pricing works when customers care about the result, not the computation required to produce it. Instead of charging for model activity, you charge when the AI successfully completes a meaningful unit of work.

Outcome-based pricing works best when:

You can measure clear task completions

The value of the result is obvious to the customer

The outcome can be consistently detected by the product

The task has a clear start and finish

Customers prefer paying for results rather than usage

Examples of billable outcomes include:

invoice processed

support ticket resolved

document summarized

lead qualified
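As a rough sketch of how outcome-based billing differs from usage billing, the example below charges only for events the product has marked as successful. The prices, the event shape, and the names are assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical price per successfully completed outcome, in cents.
OUTCOME_PRICES_CENTS = {
    "invoice_processed": 40,
    "ticket_resolved": 150,
    "lead_qualified": 200,
}


@dataclass
class TaskEvent:
    outcome: str     # which unit of work was attempted
    succeeded: bool  # whether the product detected a successful completion


def outcome_charge_cents(events: list[TaskEvent]) -> int:
    """Bill only for successful outcomes; failed attempts are not charged."""
    return sum(OUTCOME_PRICES_CENTS[e.outcome] for e in events if e.succeeded)


events = [
    TaskEvent("ticket_resolved", True),
    TaskEvent("ticket_resolved", False),  # e.g. escalated to a human: not billed
    TaskEvent("invoice_processed", True),
]
print(outcome_charge_cents(events))  # 190
```

Note that the compute spent on the failed attempt is absorbed by the vendor, which is why this model depends on reliable outcome detection.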

When credit-based pricing fits

Credit systems abstract away the underlying usage units and bundle them into a simpler currency that customers can spend across features.

Instead of thinking about tokens, API calls, or GPU time, customers just buy credits and consume them as they use the product.

Credit-based pricing works well when:

Your product meters multiple types of usage

Different features consume different resources

Your buyers are non-technical teams

You want simpler pricing pages

You want prepaid revenue with flexible usage
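One way to picture a credit system is as a conversion table from each metered feature into a shared currency. The sketch below assumes made-up credit rates and feature names.

```python
# Hypothetical credit cost per unit of each metered feature.
CREDITS_PER_UNIT = {
    "chat_message": 1,
    "image_generation": 10,
    "gpu_second": 2,
}


def credits_used(usage: dict[str, int]) -> int:
    """Convert mixed usage into a single credit total."""
    return sum(CREDITS_PER_UNIT[feature] * qty for feature, qty in usage.items())


usage = {"chat_message": 300, "image_generation": 12, "gpu_second": 45}
print(credits_used(usage))  # 510
```

The customer sees one number (510 credits) even though three different resources were consumed behind the scenes.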

When hybrid models outperform single metrics

Most mature AI companies eventually combine multiple pricing layers. A single metric rarely balances simplicity, predictability, and cost coverage on its own.

A common structure looks like this:

Base subscription for product access

Seats or workspace pricing for collaboration

Usage allowance included in the plan

Overage charges for additional usage

This approach gives customers predictable starting costs while still allowing revenue to scale with usage. A typical hybrid model might look like:

| Pricing Layer | What It Covers | How It’s Typically Measured | Why It Exists |
| --- | --- | --- | --- |
| Base subscription | Access to the platform, core features, dashboards, and integrations | Monthly or annual plan fee | Creates predictable baseline revenue and keeps pricing simple for new customers |
| Seats/workspace access | Number of users collaborating on the product | Per user or per workspace | Scales with team adoption and reflects collaboration value |
| Included usage allowance | Initial AI capacity bundled with the plan | Tokens, tasks, compute time, or credits included each month | Reduces billing anxiety and helps customers get started without worrying about usage |
| Overage consumption | Additional AI workloads beyond the included allowance | Tokens, GPU seconds, API calls, workflows executed | Ensures revenue grows with infrastructure usage and prevents heavy users from eroding margins |

Many AI developer platforms follow this pattern: a base plan plus usage-based compute or token billing once the included allowance is exceeded.
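The layered structure can be sketched as a single invoice calculation. All fees, the allowance size, and the overage rate below are assumed values for illustration only.

```python
from decimal import Decimal


def hybrid_invoice(seats: int, tokens_used: int) -> dict[str, Decimal]:
    """Base fee + per-seat fee + overage beyond the included allowance."""
    base_fee = Decimal("99.00")            # assumed monthly platform fee
    seat_fee = Decimal("20.00")            # assumed price per seat
    included_tokens = 5_000_000            # assumed allowance bundled in the plan
    overage_per_million = Decimal("4.00")  # assumed overage rate

    overage_tokens = max(0, tokens_used - included_tokens)
    lines = {
        "base": base_fee,
        "seats": seat_fee * seats,
        "overage": Decimal(overage_tokens) * overage_per_million / Decimal(1_000_000),
    }
    lines["total"] = sum(lines.values(), Decimal("0"))
    return lines


invoice = hybrid_invoice(seats=5, tokens_used=7_500_000)
print(invoice["total"])  # 209.00
```

A customer who stays inside the allowance pays a fixed 199.00 in this sketch; only the 2.5M tokens above the allowance add a usage charge.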

How to balance revenue predictability with customer growth

AI pricing always sits between two competing needs. Finance teams want predictable revenue they can forecast and report. Customers want the freedom to scale usage without committing to large fixed contracts.

The challenge is building a pricing structure that gives the business stable revenue while still letting customers adopt the product gradually.

Why finance teams need predictable revenue

Finance teams rely on recurring revenue metrics like ARR and MRR to plan budgets, forecast growth, and communicate performance to investors.

When revenue comes entirely from usage, it can fluctuate heavily from month to month depending on how customers run AI workloads. That volatility makes forecasting difficult and introduces uncertainty in financial planning.

Pure usage models often create problems like:

Revenue fluctuates based on customer workloads each month

Forecasting future growth becomes difficult

Investor reporting becomes less predictable

Budget planning for hiring and infrastructure becomes harder

Finance teams struggle to estimate long-term revenue

Because of this, many companies prefer pricing models that include some form of committed revenue.

Why customers need flexible pricing

Customers approach AI products very differently from finance teams. Most want to start small, experiment with the product, and scale usage only after they see value.

Fixed contracts or large upfront commitments create friction during this early adoption phase.

Flexible pricing helps customers grow into the product over time.

Customers usually prefer pricing models where:

They can start with a small initial commitment

Spending increases only as usage grows

They can experiment with AI features without taking on much risk

Costs scale naturally as their workloads scale

They are not locked into long-term contracts early

Pricing models that force large commitments too early often slow down product adoption.

How credits bridge predictability and flexibility

Prepaid credit models are one way many AI companies balance these two needs. Customers purchase credits upfront, which creates predictable, committed revenue for the business. They then consume those credits gradually as they use the product, allowing spending to scale with real usage.

In practice, credit systems provide several advantages:

Revenue becomes more predictable because credits are purchased upfront

Customers can allocate a clear usage budget internally

Multiple usage types can be bundled into one pricing system

Customers maintain flexibility in how they consume the product

Additional usage can trigger credit top-ups instead of contract renegotiation

Modern enterprise billing platforms like Flexprice make this possible by supporting credit wallets, balance tracking, expiration policies, and automated overage rules, allowing companies to maintain predictable revenue while still offering flexible usage-based pricing.
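For intuition, a credit wallet with balance tracking and expiration can be modeled in a few lines. This is a simplified sketch with hypothetical class names, not Flexprice's actual data model or API; it assumes credits are spent from the soonest-expiring grant first.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CreditGrant:
    credits: int
    expires_on: date


@dataclass
class Wallet:
    grants: list[CreditGrant] = field(default_factory=list)

    def balance(self, today: date) -> int:
        """Only unexpired grants count toward the spendable balance."""
        return sum(g.credits for g in self.grants if g.expires_on >= today)

    def consume(self, amount: int, today: date) -> bool:
        """Spend from the soonest-expiring grants first; refuse if balance is short."""
        if amount > self.balance(today):
            return False
        live = sorted(
            (g for g in self.grants if g.expires_on >= today),
            key=lambda g: g.expires_on,
        )
        for grant in live:
            used = min(grant.credits, amount)
            grant.credits -= used
            amount -= used
        return True


wallet = Wallet([CreditGrant(1_000, date(2025, 3, 31)), CreditGrant(500, date(2025, 1, 31))])
today = date(2025, 1, 15)
wallet.consume(600, today)
print(wallet.balance(today))  # 900
```

Spending the soonest-expiring credits first is a design choice that minimizes how many credits a customer loses to expiration.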

How to add guardrails that prevent bill shock

Usage-based pricing works best when customers feel they are in control of their spending. Without clear guardrails, sudden usage spikes can lead to large and unexpected invoices.

Practical safeguards help customers monitor usage early and prevent runaway costs before they become a billing issue.

Spend caps and real-time alerts

Hard caps

Block usage once a predefined spending limit is reached, so no additional charges occur

Soft caps

Notify customers when a threshold is crossed while allowing usage to continue

Tiered alerts

Send notifications at multiple thresholds, such as 50%, 75%, and 90% of the allowed spend

Admin notifications

Alert both the user and account owner when usage rises quickly

Automatic throttling

Temporarily slow or limit workloads when usage approaches the cap
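The cap and alert rules above can be sketched as one check that runs whenever spend is updated. The function name and signature are hypothetical, and amounts are kept in integer cents to avoid rounding issues.

```python
ALERT_THRESHOLDS_PCT = (50, 75, 90)  # tiered alert levels from the list above


def check_spend(
    spend_cents: int, cap_cents: int, hard_cap: bool, already_alerted: set[int]
) -> tuple[bool, list[int]]:
    """Return (allow_usage, newly_crossed_thresholds) for the current spend."""
    new_alerts = [
        pct
        for pct in ALERT_THRESHOLDS_PCT
        if spend_cents * 100 >= pct * cap_cents and pct not in already_alerted
    ]
    already_alerted.update(new_alerts)  # never send the same alert twice
    # A hard cap blocks usage at the limit; a soft cap only notifies.
    allow = not (hard_cap and spend_cents >= cap_cents)
    return allow, new_alerts


alerted: set[int] = set()
print(check_spend(8_000, 10_000, hard_cap=True, already_alerted=alerted))   # (True, [50, 75])
print(check_spend(10_000, 10_000, hard_cap=True, already_alerted=alerted))  # (False, [90])
```

Tracking which thresholds have already fired keeps customers from receiving the same alert on every usage event.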

Prepaid credits with overage rules

Prepaid balance control

Usage is deducted from a fixed credit balance purchased upfront

Usage stops at zero balance

Consumption pauses when the wallet runs out of credits

Automatic credit top-ups

Accounts can automatically purchase additional credits when balances are depleted

Low-balance alerts

Customers receive notifications when credits are about to run out

Overage rules

You can allow usage beyond the credit balance or require a top-up before continuing
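These overage behaviors can be expressed as a policy applied at deduction time. The sketch below uses hypothetical policy names and a made-up top-up size, and it assumes the top-up size is a positive number.

```python
from enum import Enum


class OveragePolicy(Enum):
    BLOCK = "block"          # usage stops at zero balance
    AUTO_TOP_UP = "top_up"   # buy more credits automatically
    ALLOW = "allow"          # let the balance go negative and bill it later


def deduct(
    balance: int, cost: int, policy: OveragePolicy, top_up_size: int = 1_000
) -> tuple[int, bool]:
    """Return (new_balance, allowed) after applying the overage policy."""
    if cost <= balance:
        return balance - cost, True
    if policy is OveragePolicy.BLOCK:
        return balance, False
    if policy is OveragePolicy.AUTO_TOP_UP:
        while balance < cost:
            balance += top_up_size  # each iteration represents one automatic purchase
        return balance - cost, True
    return balance - cost, True  # ALLOW: the negative balance is billed as overage


print(deduct(50, 80, OveragePolicy.BLOCK))        # (50, False)
print(deduct(50, 80, OveragePolicy.AUTO_TOP_UP))  # (970, True)
print(deduct(50, 80, OveragePolicy.ALLOW))        # (-30, True)
```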

Customer-facing usage dashboards

Real-time usage tracking

Customers can see consumption as it happens

Credit balance visibility

Clear view of remaining credits or usage allowance

Projected monthly spend

Estimate of total spend based on current usage patterns

Detailed usage breakdowns

Visibility by feature, workflow, or API key

Historical billing records

Past usage and invoices are available for review

Clear visibility allows customers to adjust usage early, reducing billing surprises and lowering support requests related to unexpected charges.
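A projected monthly spend figure can be as simple as a linear run-rate estimate. The sketch below assumes spend accrues evenly through the month, which is a simplification; real dashboards may weight recent days more heavily.

```python
import calendar
from datetime import date


def projected_monthly_spend(spend_to_date: float, today: date) -> float:
    """Linear run rate: average daily spend so far times days in the month."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    daily_rate = spend_to_date / today.day
    return round(daily_rate * days_in_month, 2)


# $120 spent by June 10 projects to $360 for the 30-day month.
print(projected_monthly_spend(120.0, date(2025, 6, 10)))  # 360.0
```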

How to test pricing metrics without engineering tickets

Changing pricing used to require engineering work. Every experiment meant new code, testing cycles, and deployment risk. That made teams hesitant to adjust pricing even when the current model clearly wasn’t working. Modern billing infrastructure removes that bottleneck by letting product and finance teams experiment with pricing logic directly, without waiting on engineering.

Sandbox environments for safe experimentation

A sandbox environment lets you test pricing changes without touching real customer data or invoices. Teams can simulate usage, run billing calculations, and confirm that invoices behave exactly as expected before rolling anything out to production.

You can think of it as a flight simulator for pricing. Pilots train on simulators before flying a real aircraft because mistakes in a simulator are safe. Pricing experiments work the same way. You can simulate different workloads, check invoice outcomes, and adjust rules until everything behaves correctly.
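As a toy version of that simulator, the sketch below runs a candidate price against simulated workloads and reports the margin on each, so you can see where margins break before any real invoice is affected. The prices, costs, and workload sizes are invented for illustration.

```python
def simulate_invoices(
    workloads_tokens: list[int],
    price_per_million: float,
    cost_per_million: float,
    base_fee: float,
) -> list[dict]:
    """Apply candidate pricing to simulated workloads; no real invoices are touched."""
    results = []
    for tokens in workloads_tokens:
        millions = tokens / 1_000_000
        revenue = base_fee + millions * price_per_million
        cost = millions * cost_per_million
        results.append({"tokens": tokens, "revenue": revenue, "margin": revenue - cost})
    return results


# Candidate: flat $50 plan with no usage charge, against an assumed $6 per 1M token cost.
workloads = [1_000_000, 10_000_000, 50_000_000]
for row in simulate_invoices(workloads, price_per_million=0.0, cost_per_million=6.0, base_fee=50.0):
    print(row["tokens"], row["margin"])
```

In this made-up scenario the flat plan is profitable for the light user and loses money on the two heavier ones, which is the kind of result a sandbox run is meant to surface early.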

Migrating customers between plans without manual work

Pricing experiments often require moving customers between plans. If that process requires spreadsheets or manual billing adjustments, experimentation quickly becomes painful and error-prone.

A flexible billing system handles these transitions automatically. It calculates prorated charges, updates plan entitlements, and moves customers from one pricing structure to another without disrupting their usage.
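Proration can be computed in several ways. The sketch below shows one common approach, assumed here for illustration: on an upgrade, charge the price difference for the unused portion of the billing period.

```python
from decimal import Decimal, ROUND_HALF_UP


def prorated_upgrade_charge(
    old_price: Decimal, new_price: Decimal, days_remaining: int, days_in_period: int
) -> Decimal:
    """Charge only the price difference for the unused part of the billing period."""
    amount = (new_price - old_price) * days_remaining / days_in_period
    return amount.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Upgrade from a $50 plan to a $110 plan with 10 of 30 days left.
print(prorated_upgrade_charge(Decimal("50"), Decimal("110"), 10, 30))  # 20.00
```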

Changing pricing logic without code deploys

In many companies, pricing logic lives directly inside the product code. Any change requires engineering time, QA checks, and deployment cycles. This makes even small adjustments slow.

Configuration-driven billing platforms separate pricing rules from the application itself.

Product or finance teams can update plans, modify usage rules, or launch promotions through a dashboard or API without touching product code.

This works much like a CMS, where you can edit a website without rewriting its HTML every time. Pricing infrastructure built this way lets teams change billing logic just as quickly.
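The idea of separating pricing rules from application code can be sketched as follows. The JSON schema and meter names are hypothetical and do not represent any specific platform's configuration format.

```python
import json

# Pricing rules stored as data (for example, edited through a dashboard),
# not hard-coded in the product. The schema here is hypothetical.
PRICING_CONFIG = json.loads("""
{
  "plan": "pro",
  "meters": {
    "api_call": {"unit_price_cents": 2, "included": 1000},
    "workflow_run": {"unit_price_cents": 25, "included": 20}
  }
}
""")


def usage_charge_cents(config: dict, usage: dict[str, int]) -> int:
    """Generic rating function: it reads rules from config and has no prices of its own."""
    total = 0
    for meter, qty in usage.items():
        rule = config["meters"][meter]
        billable = max(0, qty - rule["included"])
        total += billable * rule["unit_price_cents"]
    return total


print(usage_charge_cents(PRICING_CONFIG, {"api_call": 1500, "workflow_run": 30}))  # 1250
```

Changing a unit price or an included allowance means editing the configuration; the rating function stays the same and needs no redeploy.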

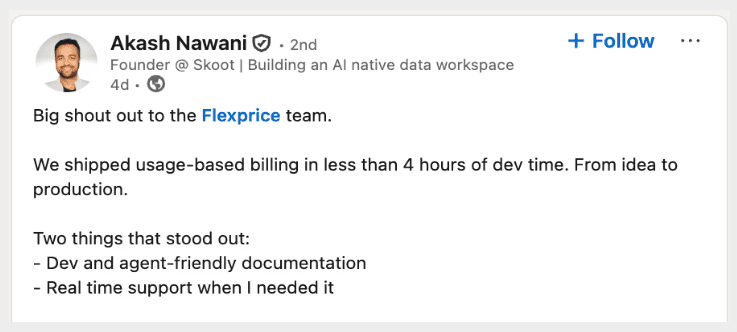

See how Flexprice acts as a programmable billing layer between your product and your payment gateway. Flexprice also helps teams complete the integration in just 4 hours of dev time.

Why the billing infrastructure determines whether value-aligned pricing is possible

AI teams spend weeks debating the right pricing metric: tokens, tasks, workflows, or outcomes. But the real constraint usually isn’t the idea. It’s the infrastructure behind it.

If your billing system can’t measure usage accurately, handle credits, or combine multiple pricing models, the pricing strategy never makes it to production. In practice, pricing is limited by what your billing stack can actually support.

Think of it like designing a high-performance car but installing a weak engine. The design may be great on paper, but the car will never perform the way it was intended. Pricing works the same way. A strong pricing strategy only works if the billing infrastructure underneath can support it.

Flexprice is an enterprise-grade billing tool built around this approach. It allows teams to implement value-aligned pricing without building custom billing infrastructure.

With Flexprice, teams can:

meter tokens, API calls, compute time, tasks, or any custom usage metric in real time

manage credit wallets with prepaid balances, expirations, and rollover rules

support hybrid pricing models that combine subscriptions, usage, and credits

launch pricing changes quickly without large engineering projects

The result is that pricing stops being something that takes months to implement. Teams can experiment with new models, adjust pricing as the product evolves, and align revenue with the real value customers get from the product.

To learn more about this, visit Flexprice.io
