Ayush Parchure

Content Writer, Flexprice

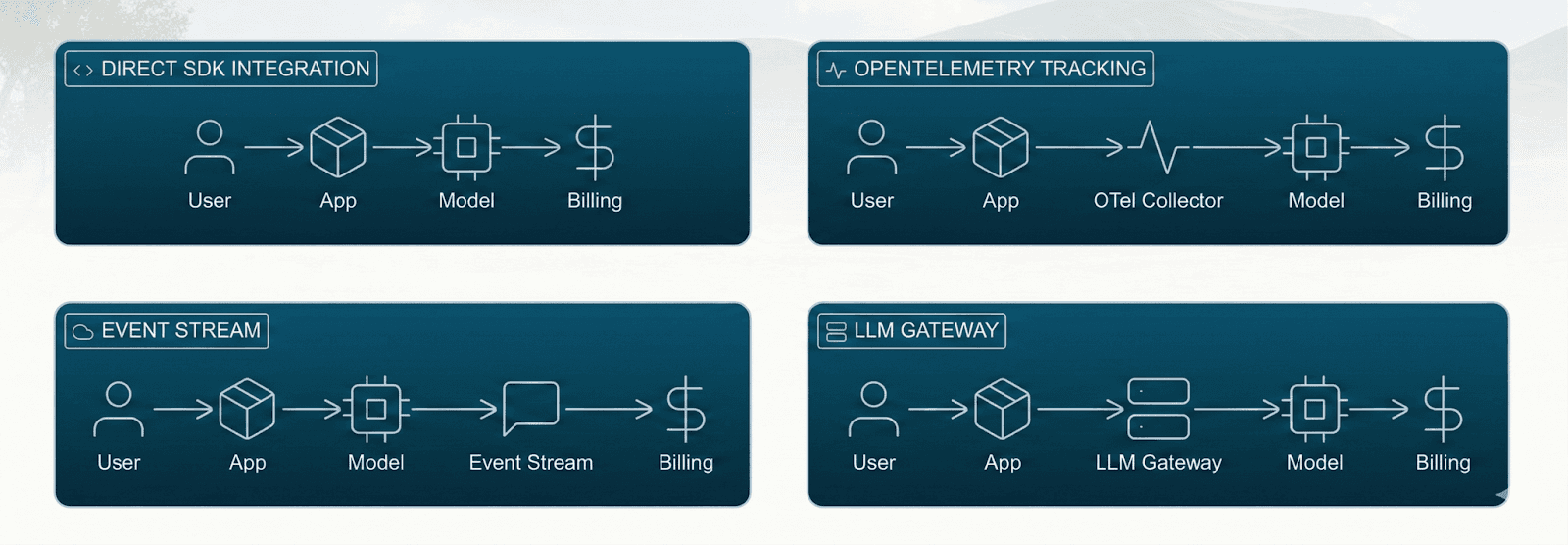

How Flexprice meters LLM token usage for billing

Flexprice is enterprise-grade billing infrastructure designed for usage-based and credit-based pricing. AI products can meter LLM token usage by sending token consumption as usage events, which Flexprice aggregates, prices, and bills in real time.

Token metering as a usage metric

Flexprice treats tokens as a custom usage metric. AI products can define a metered feature such as model usage and send token consumption as usage events. This means the tokens your models process become the billing signal, similar to how other products meter API calls, storage, or compute hours.

Both input and output tokens count because that is how most LLM providers price their services.

Flexprice does not calculate token counts itself. The token usage is already known by your application after the model call completes. Your backend or client captures the usage metadata returned by the provider and sends it to Flexprice as part of a usage event.

Your application sends token usage as structured usage events

Events typically include fields such as tokens_in, tokens_out, or total_tokens, along with identifiers like customer_id, feature, or model. These fields are defined by your product’s instrumentation.

The billing system records these events as metered consumption

Each event is associated with a customer and a pricing meter

Once Flexprice receives the event, it measures the usage and applies the pricing logic defined in your billing configuration. Tokens become a first-class billing unit inside the system rather than something developers manually summarize later.

This removes a common operational problem. Many teams initially store token usage in logs or analytics tools and try to reconstruct billing data later. That approach requires reconciliation, backfills, and manual aggregation. By treating tokens as a metered unit from the start, your usage flows directly into billing without an extra translation step.
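
The capture-and-send step described above can be sketched as follows. This is a minimal illustration, assuming the provider returns usage metadata as a dictionary with `prompt_tokens` and `completion_tokens` keys (key names vary by provider). The `build_usage_event` helper and the event shape are hypothetical and are not Flexprice's actual API; the field names `tokens_in`, `tokens_out`, and `total_tokens` follow the examples in this section.

```python
from datetime import datetime, timezone


def build_usage_event(customer_id, model, feature, provider_usage):
    """Turn provider usage metadata into a structured usage event.

    `provider_usage` is assumed to look like
    {"prompt_tokens": int, "completion_tokens": int}; adapt the keys
    to whatever your provider actually returns.
    """
    tokens_in = int(provider_usage["prompt_tokens"])
    tokens_out = int(provider_usage["completion_tokens"])
    return {
        "customer_id": customer_id,
        "feature": feature,
        "model": model,
        "tokens_in": tokens_in,
        "tokens_out": tokens_out,
        "total_tokens": tokens_in + tokens_out,
        # Record when the usage happened, in UTC.
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


# Hypothetical identifiers, for illustration only.
event = build_usage_event(
    customer_id="cust_123",
    model="example-model",
    feature="chat",
    provider_usage={"prompt_tokens": 1200, "completion_tokens": 300},
)
```

The resulting dictionary is what your backend would send to the billing system right after the model call completes, rather than writing it to a log for later reconstruction.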

Event ingestion

Flexprice receives token usage through structured usage events that your application emits in real time. Each event represents a unit of consumption that the billing engine can meter and price.

Instead of reconstructing usage later from logs or analytics pipelines, your system sends the data as it happens. This ensures billing always reflects actual activity inside the product.

Each event typically includes a set of fields that describe both the usage and the context in which it happened.

Token counts

These fields capture the actual model usage. Input tokens represent the prompt and context sent to the model, while output tokens represent the generated response. Together, they define the total consumption that will be billed.

Customer or tenant ID

This identifies which account generated the usage. The billing system uses this field to attribute every event to the correct customer, workspace, or tenant so that usage aggregates correctly at the account level.

Plan or subscription reference

This links the event to the active pricing plan. It allows the billing system to apply the correct pricing rules, quotas, or credit balances associated with that customer’s subscription.

Feature tag

Feature tags help categorize usage within the product. This makes it possible to price different product features separately or analyze which parts of the product drive the most token consumption.

Model name

Different models often have different cost structures. Including the model name ensures the billing system can apply model-specific pricing or adjust rates if underlying provider costs change.

Additional metadata used for pricing logic

Extra fields such as region, environment, agent type, or request type can also be attached. These attributes allow pricing rules to reference more context if needed.

Flexprice does not infer token usage from requests or logs. It simply processes the structured usage data that your system sends. Because events contain both usage and context, the billing system can apply flexible pricing rules that depend on properties such as model, feature, or environment.
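
One way to keep events complete is to validate them before they leave your application. The sketch below models the fields listed above as a small data class. The class name `TokenUsageEvent`, the field name `plan_id`, and the validation rules are assumptions made for illustration; they do not describe Flexprice's event schema.

```python
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class TokenUsageEvent:
    customer_id: str   # customer or tenant ID
    plan_id: str       # plan or subscription reference (hypothetical name)
    feature: str       # feature tag
    model: str         # model name
    tokens_in: int
    tokens_out: int
    metadata: dict = field(default_factory=dict)  # region, environment, ...

    def __post_init__(self):
        # Reject events that could not be attributed or priced later.
        for name in ("customer_id", "plan_id", "feature", "model"):
            if not getattr(self, name):
                raise ValueError(f"{name} is required")
        if self.tokens_in < 0 or self.tokens_out < 0:
            raise ValueError("token counts must be non-negative")

    @property
    def total_tokens(self):
        return self.tokens_in + self.tokens_out

    def to_payload(self):
        """Return a plain dict suitable for JSON serialization."""
        payload = asdict(self)
        payload["total_tokens"] = self.total_tokens
        return payload


event = TokenUsageEvent(
    customer_id="cust_123",
    plan_id="plan_pro",
    feature="summarize",
    model="example-model",
    tokens_in=800,
    tokens_out=200,
    metadata={"region": "eu", "environment": "production"},
)
payload = event.to_payload()
```

Failing fast on a missing customer or model is a design choice: an event without context can still be counted, but it cannot be priced by model or feature afterwards.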

Aggregation and rating

When usage events enter the system, Flexprice processes them in two stages. First, it measures how much usage occurred by grouping events over defined time windows. Then it determines what that usage should cost by applying the pricing rules in your product catalog.

Aggregation

Aggregation answers a simple question: how much usage happened during a given billing period.

Flexprice collects all incoming events and summarizes them across a defined time window, such as hourly, daily, or monthly. The goal is to calculate usage quantities before any pricing is applied.

Total tokens per customer

The system groups all token events by customer or tenant and calculates the total tokens consumed during the billing window.

Tokens grouped by model or feature

Usage can also be grouped by attributes like model name or product feature. This makes it possible to see which models or capabilities generate the most consumption.

Combined or derived metrics

Input and output tokens can be combined to calculate total tokens. Other derived metrics can also be computed depending on how usage is structured.

At this stage, the system is only measuring usage. Aggregation determines quantities but does not assign any monetary value.
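
The grouping described above can be illustrated with a few lines of Python. This is a simplified sketch, not Flexprice's implementation: it assumes the events have already been filtered to a single billing window, and the `aggregate_tokens` function and sample data are hypothetical.

```python
from collections import defaultdict


def aggregate_tokens(events, group_by=("customer_id",)):
    """Sum token counts per group. No prices are applied at this stage."""
    totals = defaultdict(
        lambda: {"tokens_in": 0, "tokens_out": 0, "total_tokens": 0}
    )
    for event in events:
        key = tuple(event[name] for name in group_by)
        bucket = totals[key]
        bucket["tokens_in"] += event["tokens_in"]
        bucket["tokens_out"] += event["tokens_out"]
        # Derived metric: input and output combined.
        bucket["total_tokens"] += event["tokens_in"] + event["tokens_out"]
    return dict(totals)


# Events assumed to fall inside one billing window.
events = [
    {"customer_id": "cust_a", "model": "model-small", "tokens_in": 1000, "tokens_out": 200},
    {"customer_id": "cust_a", "model": "model-large", "tokens_in": 500, "tokens_out": 500},
    {"customer_id": "cust_b", "model": "model-small", "tokens_in": 300, "tokens_out": 100},
]

per_customer = aggregate_tokens(events)
per_customer_model = aggregate_tokens(events, group_by=("customer_id", "model"))
```

Here `per_customer` holds one total per customer, while `per_customer_model` keeps the model dimension so that model-specific rates can be applied in the rating stage.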

Rating

Rating answers the next question: what should that usage actually cost?

After usage is aggregated, Flexprice applies the pricing rules defined in your product catalog. These rules convert usage quantities into charges or credit deductions.

Fixed price per 1,000 tokens

A simple rate where each block of tokens has a consistent price regardless of volume.

Tiered or volume-based pricing

The price per token can change as usage grows, allowing discounts at higher consumption levels.

Different prices for input and output tokens

Some products price input tokens and output tokens differently to reflect underlying provider costs.

Customer-specific overrides

Enterprise contracts can override standard pricing for specific customers or negotiated deals.

Model-based pricing

Usage from different models can be priced separately since model costs often vary significantly.

Rating converts usage quantities into actual billing outcomes. This results in monetary charges, credit deductions from a wallet balance, or a combination of both. Aggregation measures how much usage happened. Rating determines what that usage is worth.
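
To make these pricing rules concrete, here is a hedged sketch of a rating step. The catalog, the override table, all prices, and the function names are invented for illustration and do not reflect Flexprice's configuration format. The tiered example uses graduated tiers, where each rate applies only to the tokens that fall inside its tier; other tiering schemes exist.

```python
from decimal import Decimal

# Hypothetical catalog: price per 1,000 tokens by model and direction.
CATALOG = {
    "model-small": {"input": Decimal("0.50"), "output": Decimal("1.50")},
    "model-large": {"input": Decimal("3.00"), "output": Decimal("9.00")},
}

# Hypothetical customer-specific overrides from negotiated contracts.
OVERRIDES = {
    ("cust_enterprise", "model-large"): {
        "input": Decimal("2.40"),
        "output": Decimal("7.20"),
    },
}


def rate_usage(customer_id, model, tokens_in, tokens_out):
    """Price input and output tokens separately, honoring overrides."""
    rates = OVERRIDES.get((customer_id, model), CATALOG[model])
    amount = Decimal(tokens_in) * rates["input"] + Decimal(tokens_out) * rates["output"]
    return amount / Decimal(1000)


def rate_graduated(total_tokens, tiers):
    """Graduated tiers: each rate applies only to tokens inside its tier.

    `tiers` is a list of (upper_bound, price_per_1k) sorted by bound;
    use None as the final bound to mean "no limit".
    """
    charge = Decimal("0")
    lower = 0
    for upper, price_per_1k in tiers:
        if upper is None or total_tokens < upper:
            in_tier = total_tokens - lower
        else:
            in_tier = upper - lower
        if in_tier <= 0:
            break
        charge += Decimal(in_tier) * price_per_1k / Decimal(1000)
        if upper is None:
            break
        lower = upper
    return charge


standard = rate_usage("cust_a", "model-large", 1000, 1000)
negotiated = rate_usage("cust_enterprise", "model-large", 1000, 1000)
tiers = [(1_000_000, Decimal("2.00")), (None, Decimal("1.50"))]
tiered = rate_graduated(1_500_000, tiers)
```

`Decimal` is used instead of floating point so that monetary amounts do not pick up rounding errors as many small charges are added together.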

Credits and wallet integration

Once usage has been rated, the system knows the monetary value of that consumption. The next question is how that value should be settled, which is where credit wallets come in. Pricing determines how much something costs, while the wallet determines how that cost is paid.

A credit wallet acts as a balance that usage charges can draw from. Instead of billing only at the end of the month, customers can prepay and consume credits as they use the product.

Customers can prepay for token credits

Organizations can add funds to their wallet in advance. These funds represent credits that will be used to pay for future token usage.

Rated usage deducts from the wallet balance

When token events are rated and converted into charges, the corresponding value is deducted from the customer’s wallet balance.

Automated balance management rules

You can configure behaviors such as automatic top-ups, credit expiration rules, or minimum balance alerts to prevent usage interruptions.

Wallet balances update as rated usage is processed. Depending on how billing is configured, invoices can still be generated separately for reporting, reconciliation, or enterprise billing workflows.
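
The wallet behavior described above can be modeled in a few lines. This is an illustrative sketch under stated assumptions: the `CreditWallet` class, its parameters, and the rule that a top-up fires whenever the balance drops below a threshold are all hypothetical, and a real top-up would involve an actual payment.

```python
from decimal import Decimal


class CreditWallet:
    def __init__(self, balance, low_balance_threshold, top_up_amount=None):
        self.balance = Decimal(balance)
        self.low_balance_threshold = Decimal(low_balance_threshold)
        # None means automatic top-ups are disabled.
        self.top_up_amount = (
            None if top_up_amount is None else Decimal(top_up_amount)
        )
        self.alerts = []

    def deduct(self, charge):
        """Deduct a rated charge, then apply the balance rules."""
        self.balance -= Decimal(charge)
        if self.balance < self.low_balance_threshold:
            if self.top_up_amount is not None:
                # Automatic top-up; a real system would charge a card here.
                self.balance += self.top_up_amount
            else:
                self.alerts.append(f"low balance: {self.balance}")
        return self.balance


auto_wallet = CreditWallet("10.00", "2.00", top_up_amount="20.00")
auto_wallet.deduct("8.50")    # drops to 1.50, then tops up by 20.00

manual_wallet = CreditWallet("10.00", "2.00")
manual_wallet.deduct("9.00")  # drops to 1.00 and records an alert
```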

Flexibility for AI and LLM pricing patterns

AI products rarely follow a single, simple pricing structure. Different models have different costs. Some features consume far more tokens than others. Pricing often needs to reflect these differences while remaining understandable for customers.

Because Flexprice pricing logic can reference properties inside usage events, it becomes possible to build pricing models that match how LLM systems actually behave.

Different rates for different model families

Usage from one model can be priced differently from another, which helps maintain margins when model costs vary.

Hybrid subscription and usage pricing

A base subscription can provide platform access while token consumption is billed as variable usage on top.

Model or feature-based pricing

Pricing rules can reference attributes such as model name, feature type, or request category. This allows different rates to apply depending on which model or capability generated the usage.

This flexibility allows companies to price based on real token consumption while still aligning their pricing with upstream model costs.
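
As a worked example of hybrid pricing, the sketch below adds variable token charges on top of a flat subscription fee. The fee, the per-model rates, and the `monthly_total` function are hypothetical values chosen to keep the arithmetic easy to follow.

```python
from decimal import Decimal

BASE_SUBSCRIPTION = Decimal("99.00")  # hypothetical monthly platform fee

# Hypothetical price per 1,000 tokens for each model family.
PRICE_PER_1K_BY_MODEL = {
    "model-small": Decimal("1.00"),
    "model-large": Decimal("6.00"),
}


def monthly_total(tokens_by_model):
    """Base subscription plus usage charges priced per model."""
    usage = sum(
        (
            Decimal(tokens) * PRICE_PER_1K_BY_MODEL[model] / Decimal(1000)
            for model, tokens in tokens_by_model.items()
        ),
        Decimal("0"),
    )
    return BASE_SUBSCRIPTION + usage


# 200,000 small-model tokens cost 200.00; 50,000 large-model tokens cost 300.00.
total = monthly_total({"model-small": 200_000, "model-large": 50_000})
```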

Integration with billing, invoices, and external systems

After usage has been aggregated and rated, the resulting charges become part of the customer’s billing record. These charges are then included in the invoicing workflow according to the subscription or contract configuration.

The billing system handles the logic that connects usage, pricing, and invoicing.

Charges attach to the subscription record

Rated usage is recorded against the customer’s subscription or billing account.

Invoices are generated on the billing schedule

Charges are included in invoices that follow the configured billing cycle, such as monthly or quarterly.

External payment systems execute payments

External payment processors such as Stripe, Razorpay, or other integrations can handle payment collection once invoices are issued.

Flexprice manages the billing logic that turns usage into charges. Payment providers handle the transaction side of collecting funds.
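
The hand-off from rated charges to an invoice can be pictured as a simple grouping step. The sketch below is an assumption-laden illustration: the `build_invoice` function, the invoice shape, and the sample charges are hypothetical, and payment collection is deliberately left out because an external processor handles it.

```python
from collections import defaultdict
from decimal import Decimal


def build_invoice(customer_id, period, rated_charges):
    """Group rated charges into invoice line items and total them."""
    amounts = defaultdict(lambda: Decimal("0"))
    for charge in rated_charges:
        amounts[charge["description"]] += charge["amount"]
    line_items = [
        {"description": description, "amount": amount}
        for description, amount in sorted(amounts.items())
    ]
    total = sum((item["amount"] for item in line_items), Decimal("0"))
    return {
        "customer_id": customer_id,
        "period": period,
        "line_items": line_items,
        "total": total,
    }


# Hypothetical rated charges for one billing period.
invoice = build_invoice(
    "cust_123",
    "2025-01",
    [
        {"description": "model-large tokens", "amount": Decimal("12.00")},
        {"description": "model-small tokens", "amount": Decimal("0.80")},
        {"description": "model-large tokens", "amount": Decimal("6.00")},
    ],
)
```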

Common mistakes to avoid when metering LLM tokens

By now, one thing should be clear: metering tokens looks simple when you start.

The basics feel straightforward: count usage, store it, and bill on it. The problems tend to show up later.

Here are some common mistakes you should avoid while metering LLM tokens:

Only metering output tokens

It is tempting to track just the completion tokens because they feel like the visible output. But providers charge for both input and output.

Providers bill for input plus output tokens

System prompts and RAG context inflate input cost quickly

Billing only output leads to a silent margin loss

Always track input_tokens, output_tokens, and total_tokens

If you ignore input tokens, your costs rise quietly while your revenue stays flat.
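
A quick calculation shows how large the gap can be. All numbers below are hypothetical: a single request with a large retrieved context, made-up provider costs, and a made-up markup.

```python
from decimal import Decimal

# Hypothetical request: large RAG context, short answer.
tokens_in, tokens_out = 6000, 400

# Hypothetical prices per 1,000 tokens.
provider_cost_per_1k = {"input": Decimal("1.00"), "output": Decimal("3.00")}
your_price_per_1k = {"input": Decimal("1.50"), "output": Decimal("4.50")}

# What you pay the provider for this request.
provider_cost = (
    tokens_in * provider_cost_per_1k["input"]
    + tokens_out * provider_cost_per_1k["output"]
) / 1000

# What you collect if you bill only output tokens.
revenue_output_only = tokens_out * your_price_per_1k["output"] / 1000

# What you collect if you bill both directions.
revenue_both = (
    tokens_in * your_price_per_1k["input"]
    + tokens_out * your_price_per_1k["output"]
) / 1000

margin_output_only = revenue_output_only - provider_cost
margin_both = revenue_both - provider_cost
```

With these assumed numbers, billing output only collects 1.80 against a provider cost of 7.20, a loss of 5.40 on the request, while billing both directions collects 10.80 and leaves a margin of 3.60.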

Sending aggregated monthly totals

Some teams send a single number at the end of the month. It looks clean, but it locks you into a rigid billing model over the long term.

Monthly totals remove model-level pricing flexibility

You lose feature-level breakdowns

No real-time wallet deduction or debugging clarity

Send granular usage events and aggregate later

When you only send 4.2 million tokens as a lump sum, you cannot answer basic questions about where that usage came from.

Not tagging the model in usage events

Models are not interchangeable from a pricing perspective. Costs may vary, and they often change.

Different models have different cost structures

Model pricing changes frequently

Without metadata, you cannot adjust pricing later

Always include model, feature, customer_id, and environment

If you skip metadata today, future pricing updates become painful migrations instead of simple config changes.

Ignoring retries and failed calls

Agents retry more than most teams expect, especially under load or when tools fail.

You may pay for three attempts but bill the customer for only one success

Retries quietly shrink margins

Failed calls still consume tokens

You do not need to overcomplicate this. Just make sure retries are tracked deliberately rather than ignored.
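
One way to track retries on purpose is to record usage for every attempt, not just the successful one. The sketch below assumes a hypothetical `call_model` callable that returns a success flag along with the tokens that attempt consumed; how failed calls report usage depends on your provider.

```python
def call_with_retries(call_model, record_usage, max_attempts=3):
    """Call the model, recording token usage for every attempt.

    `call_model(attempt)` is assumed to return (ok, usage), where `usage`
    is a dict with the tokens consumed by that attempt, even on failure.
    """
    for attempt in range(1, max_attempts + 1):
        ok, usage = call_model(attempt)
        record_usage(
            {**usage, "attempt": attempt, "status": "success" if ok else "failed"}
        )
        if ok:
            return True
    return False


recorded = []


def flaky_model(attempt):
    # Stand-in for a real call: fails twice but still consumes input tokens.
    return attempt == 3, {"tokens_in": 500, "tokens_out": 0 if attempt < 3 else 120}


succeeded = call_with_retries(flaky_model, recorded.append)
tokens_paid_for = sum(e["tokens_in"] + e["tokens_out"] for e in recorded)
tokens_in_success = sum(
    e["tokens_in"] + e["tokens_out"] for e in recorded if e["status"] == "success"
)
```

Tagging each event with `attempt` and `status` keeps the decision open: you can bill retries, absorb them, or simply report on how much margin they consume.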

Treating one user request as one model call

In simple apps, one request maps to one model call. In agent systems, that assumption breaks fast.

Agent workflows chain multiple LLM calls

Tool calls and reasoning steps multiply tokens

Billing per request often underestimates the true cost

If you assume that one request equals one model call, your billing will drift from reality as your product becomes more autonomous.
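
A simple way to see the true cost of a request is to emit one event per model call and tie them together with a shared identifier. In the sketch below, the `request_id` and `step` fields, the `tokens_per_request` function, and the sample data are all hypothetical.

```python
from collections import defaultdict


def tokens_per_request(events):
    """Roll up every model call that belongs to the same user request."""
    totals = defaultdict(lambda: {"calls": 0, "total_tokens": 0})
    for event in events:
        entry = totals[event["request_id"]]
        entry["calls"] += 1
        entry["total_tokens"] += event["tokens_in"] + event["tokens_out"]
    return dict(totals)


# One agent request fans out into three model calls; a simple one does not.
events = [
    {"request_id": "req_1", "step": "plan", "tokens_in": 900, "tokens_out": 150},
    {"request_id": "req_1", "step": "tool_call", "tokens_in": 1400, "tokens_out": 80},
    {"request_id": "req_1", "step": "final_answer", "tokens_in": 2100, "tokens_out": 400},
    {"request_id": "req_2", "step": "final_answer", "tokens_in": 300, "tokens_out": 100},
]

summary = tokens_per_request(events)
```

In this made-up data, the agent request consumes 5,030 tokens across three calls while the simple request consumes 400, so a flat per-request price would treat two very different costs as equal.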

Wrapping up

LLM tokens start as a technical metric, but once you ship an AI product, they quickly become the unit that connects model usage, infrastructure cost, and revenue.

Without proper metering, token consumption grows quietly in the background while pricing and billing struggle to keep up.

The solution is not just counting tokens. It’s building a pipeline where token usage is captured as events, attributed to the right customer or feature, and routed into a billing system that can aggregate, rate, and charge for it reliably.

That’s where tools like Flexprice come in. Instead of stitching together logs, batch jobs, and spreadsheets, you can treat tokens as a first-class billing metric.

If you’re building an AI product where token consumption drives cost and value, getting token metering right early will save you from painful reconciliation later. The best time to build that foundation is before usage scales and billing becomes the bottleneck.

How can I measure token consumption and enforce quotas to control AI operational costs?

How can we meter inference requests at the user and tenant level to enable precise billing for an AI-as-a-service platform?

What is the difference between token-based pricing and per-seat pricing for AI products?

How do credit-based pricing models work for AI and LLM products?

Why should I meter both input and output tokens instead of just tracking completions?